The Case for Enterprises Moving to Cloud Data Warehouses

Cloud-based data warehouses have come back with renewed vitality. If one was to go back in time, in the early ‘80s, when mainframe computers were fairly expensive pieces of hardware to own, companies would go in for “time-sharing”. This essentially meant that such companies, at a scheduled hour, would dial into those “remote” computers to get their work done. When PCs became cheap, this stopped.

Data warehousing in the Cloud is similar to that time-sharing concept, but of course, far more advanced technologically. They are becoming more and more relevant and prevalent in today’s world of Big Data because of many reasons.

One of them is the need to create such hosted infrastructure to house that deluge of data pouring in literally every hour.

Traditional data warehouses are still around but a dipstick survey will reveal that in the last two years or so, Enterprises have started going in for Cloud replacements. Some surveys by leading research agencies, too, confirm this development.

The question, thus, to be asked is – why?

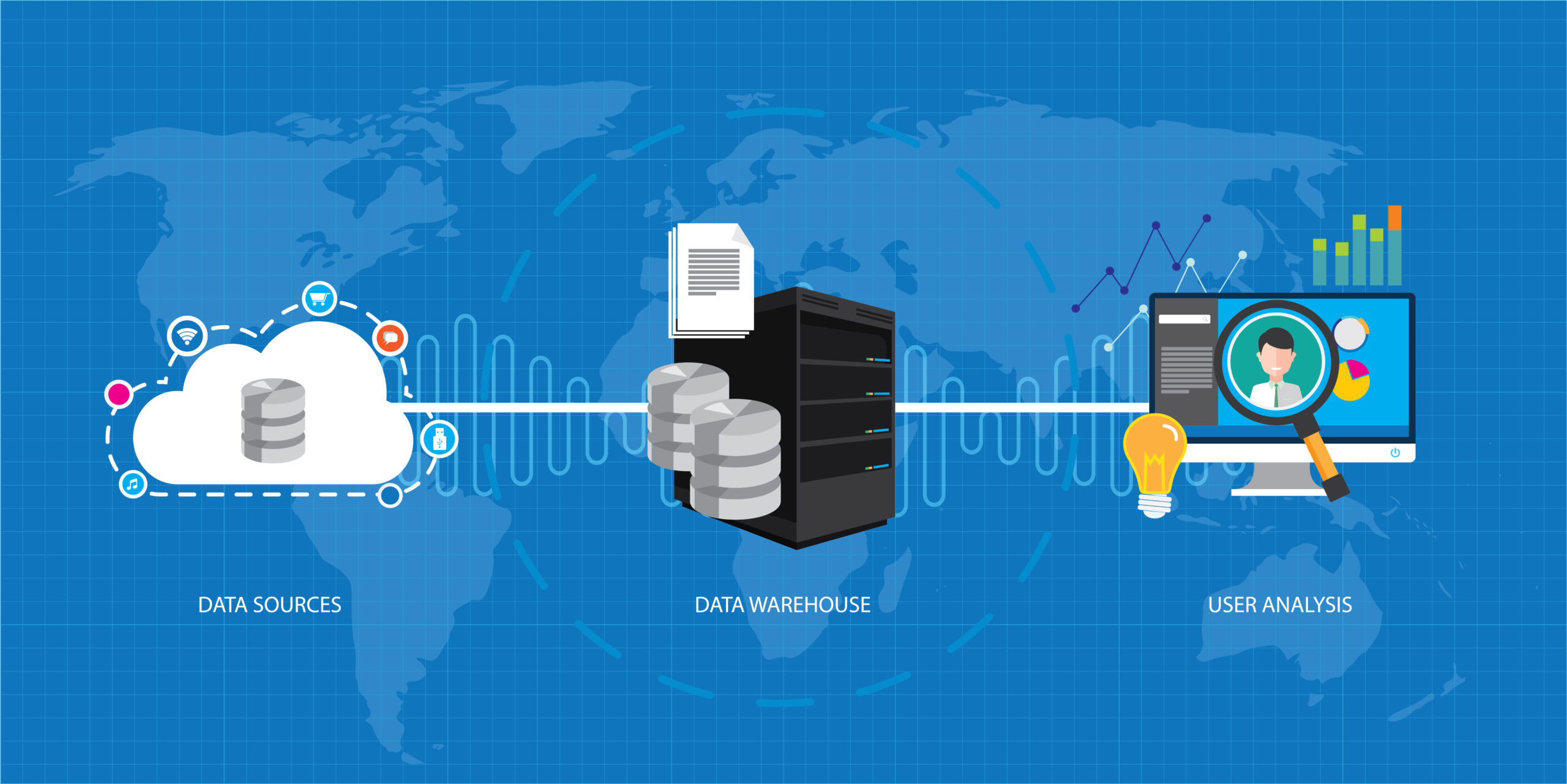

An electronic data warehouse scoops up multi-channel data, and then a company analyzes that data to support management decisions. Early versions of on-premise data warehouses were prohibitively expensive, took years to build, and because of their monolithic architecture, were not agile at all.

All of this meant a time lag between the data collected and is being analyzed. This often forced IT departments to wrestle with the question – maintain data quality or sacrifice some of it for business agility?

There’s also the question of data warehouse architecture itself at the heart of this conversation of traditional versus the Cloud. To put it rather simply, the traditional warehouses are built on a 3-layer structure, namely, bottom, middle and top, with its accompanying OLAP (Online Analytical Processing) server.

Today’s Cloud-based data warehouses do not follow that kind of rigid architecture at all; they are much for flexible. In fact, some tools like Google’s Big Query, a serverless data warehouse, allocate resources dynamically. Here, clients can upload data either from Google’s Cloud Storage or stream it in real-time.

BigQuery uses ‘Dremel’, which is a query execution engine using a columnar data structure. Dremel scans billions of rows of data in what is called the Colossus file management system.

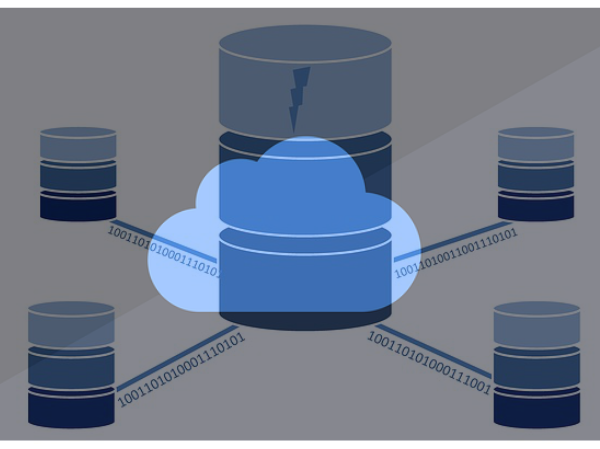

The latter distributes these files into pieces of 64 megabytes among ‘nodes’, which are then grouped into clusters. So essentially, this is a tree architecture sending queries to millions of machines.

The process of loading data into a warehouse itself has also got a counter today. The traditional method was ETL – Extract, Transform, Load – a tried and tested one that’s been around for over 20 years. It was the only way the data world knew of processing large volumes of data and preparing it for analysis.

But ETL’s supreme position was recently challenged by ELT, or the Extract, Load, Transform method.

Let’s quickly understand a basic difference:

In ETL or the traditional way, data is extracted from multiple sources and is then held in a temporary staging database. Here, data is structured and converted for the data warehouse. This is a very “structured” approach.

With the ELT method, data, once extracted, is straightaway loaded. The staging database level is eliminated. The data is instead transformed inside the data warehouse itself for analysis.

Each of them, ETL and ELT, comes with its own sets of pros and cons. But Enterprises, in today’s world of high infrastructure costs, are leaning more and more toward ELT. Plus, the ETL system often leads to cost overruns and can have scalability problems.

In fact, Enterprises are also increasingly leaning towards ELT because of the open-source software framework company Hadoop. The latter reduces storage and processing costs as compared with traditional data warehouses. This also comes as a major initiative for Enterprises these days, groaning under high infrastructure and licensing costs as they are.

Most deploy Hadoop at the warehouse level itself because as all of us know, a warehouse hosts the largest amount of data in any company, and is also computing intense. Overall, the 3 Vs of data as we know them – Volume, Variety, and Velocity – are making many Enterprises move to Hadoop ELT.

So there it is – the fight is on between the traditional data warehouses and today’s Cloud-based architectures that come with lower price tags, far better scalability, and of course, blazing performance. Let’s also not forget to add easy accessibility and interactions by teams because of the Internet.

Let’s take a closer look at all 3:

Pay when you use: That’s what Cloud-based data warehouses offer. Data-intensive Enterprises such as e-commerce Sites, for example, are almost left with no choice today but to move operations to Cloud-based warehouses.

Getting these kinds of businesses’ data processes automated and thus agile, in a cost-effective manner is a big plus. Enterprises pay when certain loads go up and downgrade accordingly.

Move up or down: Moving from manual to automating a Cloud data warehouse, IT teams can not only prototype but also rapidly release the final version of new analytic modules.

What it means is the IT team can fast-track new projects, maximizing an organization’s return on its Cloud investment. The ability that it retains of either being scaled up or down, depending on an Enterprises workload and the capacity to grow to an even petabytes scale makes the Cloud a better attraction.

Fast: The Azure SQL Data Warehouse, for example, delivers a rather dramatic query performance improvement. In addition, it also supports as many as 128 concurrent queries while being able to deliver five times more computing power compared to the previous product generation. That’s some serious speed.

What Enterprises Need to Ask themselves before Moving to the Cloud:

- Are we already part of the Cloud ecosystem like AWS or Google Cloud Services?

- How many queries do we really run? Is it 5 an hour or 5 a minute?

- How large is my data needed?

If for all three the answer is yes, switch over to the Cloud.

To sum up, Cloud-based data warehousing coupled with automation definitely expands an Enterprise’s ability to cope with Big Data but the move must be made only if it is required.

An Engine That Drives Customer Intelligence

Oyster is not just a customer data platform (CDP). It is the world’s first customer insights platform (CIP). Why? At its core is your customer. Oyster is a “data unifying software.”

Liked This Article?

Gain more insights, case studies, information on our product, customer data platform