As critical sectors such as autonomous driving and healthcare adopt AI and ML, data annotation accuracy has become crucial.

But speed and scale of annotation are also important for applications to roll out on time. Teams now also have to handle advanced annotation, such as multimodal data labeling, while ethical considerations around bias and privacy have taken center stage.

The amount of data available to our AI models has grown hugely in 2026, but the trick is to know how much of that data a model actually understands.

The success of our models is no longer about big data; it is about smart data. And that is where the role of annotation comes in.

Precise annotation is the need of the hour, as models require context-rich training data with minimal noise to improve performance.

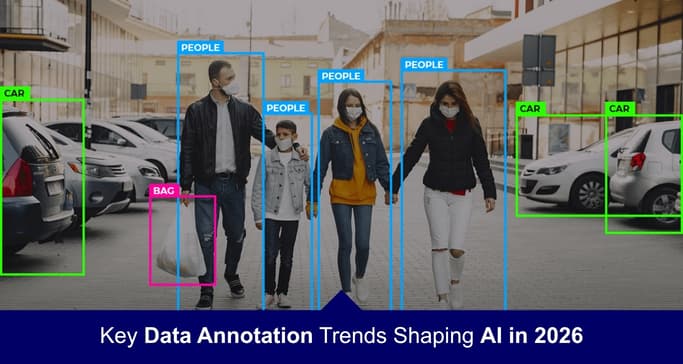

With rising expectations for AI, where autonomous systems and medical imaging demand high precision, there is an urgent need to invest in data annotation techniques that range from bounding boxes to 3D cuboids and semantic segmentation.

And of course, there needs to be synergy between technology and domain-specific human intervention to ensure contextual accuracy.

You need to shift to the rising trends to keep pace with advancing technology and adopt the new generation of data annotation practices.

Key Stats Supporting Emerging Annotation Trends

- 80% of AI effort goes into data preparation and quality, not modeling

Source: IBM

- 6% higher labeling accuracy with AI-assisted annotation.

Source: arXiv Research

- 60%+ of AI teams will use synthetic data, but hybrid datasets perform best

Source: Gartner

- Up to 15% improvement in decision confidence with human-AI collaboration

Source: Stanford HAI

Here, we discuss the top 5 emerging data annotation trends for 2026 that are must-follows.

Top 5 data annotation trends to follow in 2026

Keeping up to date with emerging data annotation technologies is essential for building successful and accurate AI models.

The five trends below are the ones most likely to help you build accurate AI models.

Trend 1: AI-powered pre-labeling and model-assisted labeling (MAL)

In 2026, investing in AI-assisted labeling is the way to get the most out of human expertise.

Keep in mind that AI is not meant to replace humans but to provide assistance in generating first-pass labels.

In effect, you use AI to identify where humans should focus.

There was a time when annotators drew every bounding box, polygon, or mask manually, but that practice is rapidly giving way to model-assisted workflows.

AI models are pre-trained to automatically generate first-pass annotations.

It is like AI doing the pre-work for you. Once the draft is ready, the annotator steps in and makes a judgment based on domain expertise.

So human effort goes into refining the draft labels, not creating them from scratch.

The annotator may tighten a loose bounding box or correct an inaccurate polygon. As a result, the work moves faster with fewer chances of error.

The overall quality of the annotation pipeline improves because each human correction fed into the system improves the pre-labeling model.

The annotation cycles get smaller; fewer corrections are needed, and annotations get more accurate.
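
The loop described above can be sketched in a few lines of Python. This is a minimal illustration, assuming the pre-labeling model returns a confidence score with each draft box; the names `PreLabel` and `route_prelabels` and the 0.85 threshold are hypothetical and not taken from any specific annotation tool.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    item_id: str
    box: tuple         # (x1, y1, x2, y2) in pixels, drafted by the model
    confidence: float  # model score in [0, 1] (assumed to be available)


def route_prelabels(prelabels, review_threshold=0.85):
    """Split drafts into a light spot-check queue and a full-review queue."""
    spot_check, full_review = [], []
    for p in prelabels:
        if p.confidence >= review_threshold:
            spot_check.append(p)
        else:
            full_review.append(p)
    return spot_check, full_review


def iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# Example: one confident draft, one uncertain draft
drafts = [
    PreLabel("img_001", (10, 10, 110, 110), 0.95),
    PreLabel("img_002", (40, 40, 90, 90), 0.60),
]
spot_check, full_review = route_prelabels(drafts)
```

Comparing each draft box with the annotator's corrected box using `iou` gives a rough measure of how much correction was needed. Tracking that number over time is one way to check whether the pre-labeling model is actually improving.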

This approach works well in the real world. For example, in autonomous driving, accuracy is critical.

A driving dataset can’t depend on automated labeling alone. The pre-labeling of vehicles, lanes, boundaries, and pedestrians requires human intervention to address scenarios such as erratic lighting or unpredictable road behavior.

Without human intervention, capturing road conditions and edge cases would not be possible.

The same applies to other industries, such as healthcare, which is again a critical field with no room for error.

AI can highlight tumors, but only a radiologist can validate the labels for complete accuracy.

While AI supports high-speed annotation at scale, it still cannot handle ambiguity.

For contextual accuracy and edge cases, human expertise is needed.

And gradually, this human feedback strengthens pre-labeling models and makes them more reliable.

With the increase in real-world complexities, edge cases, and rare conditions, AI-driven labeling methods are needed to create accurate models within the budget and timelines.

Trend 2: The rise of multimodal and sensor-fusion annotation

Today, models can understand environments better by concurrently using multiple data sources.

RGB images or plain text alone are not enough, especially in robotics or autonomous driving. So, it is no longer only about efficiency; capability is equally important.

In advanced applications, annotators must handle a wide range of inputs.

The data to be labeled may include 2D camera images, radar signals, and even 3D LiDAR point clouds.

Visual appearance, depth, and motion all need to be annotated together.

AI models in industries need sensor-fusion annotation to interpret scenes, statuses, and situations.

In most cases, a single sensor cannot capture the full picture. So, teams need to shift from labeling images to labeling across sensors.

Annotation is no longer flat. Annotators need to work with objects that disappear, reappear, shift, move, or overlap.

It is no longer about 2D boxes; the annotators must handle 3D cuboids. The future of the annotation workforce needs specialized tools, knowledge, and skills.
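
To make the link between 3D cuboids and 2D camera images concrete, here is a minimal Python sketch that projects a labeled cuboid into the image plane. It assumes a simple pinhole camera model, a cuboid that is axis-aligned and already expressed in the camera frame (x right, y down, z forward), and illustrative intrinsics `fx`, `fy`, `cx`, `cy`. Real pipelines also need the LiDAR-to-camera extrinsic calibration, cuboid rotation, and lens distortion, which are left out here.

```python
from itertools import product


def cuboid_corners(center, size):
    """Return the 8 corners of an axis-aligned cuboid (camera frame)."""
    cx3, cy3, cz3 = center
    hx, hy, hz = (s / 2 for s in size)
    return [
        (cx3 + sx * hx, cy3 + sy * hy, cz3 + sz * hz)
        for sx, sy, sz in product((-1, 1), repeat=3)
    ]


def project_point(point, fx, fy, cx, cy):
    """Pinhole projection of a 3D point to pixel coordinates.

    Returns None for points at or behind the camera plane.
    """
    x, y, z = point
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)


def cuboid_to_2d_box(center, size, fx, fy, cx, cy):
    """Tightest 2D box (u1, v1, u2, v2) around the projected cuboid corners."""
    pixels = [project_point(p, fx, fy, cx, cy) for p in cuboid_corners(center, size)]
    if any(p is None for p in pixels):
        return None  # cuboid is partly behind the camera; needs special handling
    us = [p[0] for p in pixels]
    vs = [p[1] for p in pixels]
    return (min(us), min(vs), max(us), max(vs))


# Example: a 2 m cube, 10 m in front of the camera (intrinsics are made up)
box_2d = cuboid_to_2d_box((0, 0, 10), (2, 2, 2), fx=1000, fy=1000, cx=640, cy=360)
```

A projection like this lets a reviewer check whether a cuboid drawn in the point cloud lines up with the same object in the camera image, which is the kind of cross-sensor consistency that sensor-fusion annotation depends on.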

The real-world applications are already shifting to multimodal and sensor-fusion annotation.

In autonomous driving, certain situations require multiple sensors for the system to operate.

Under conditions such as fog, low light, or heavy rain, a single sensor may fail.

Here, a combination of LiDAR, radar, and camera data helps models accurately detect objects.

Multimodal annotation is not only about adding extra data; it also creates contextually rich data that captures the complexity of real-life situations.

In healthcare, integrating imaging data with patient records is essential, and multimodal annotation supports this. This improves diagnosis and treatment recommendations.

In robotics, systems need to understand human interaction at a more granular level and therefore rely on sensor-fusion data.

That data helps robots understand object movement and spatial environments.

Multimodal understanding is also important in 2026. Multimodal LLMs need to be trained to read, reason, and respond, which calls for annotators with hybrid expertise.

Annotators with an understanding of spatial geometry, motion dynamics, and contextual reasoning will make a difference.

So, investing in cross-trained annotation teams becomes a must. As AI moves closer to broader real-world deployment, multimodal and sensor-fusion annotation becomes essential for building AI models.

Trend 3: Synthetic data validation and hybrid datasets

Synthetic data has been used for rapid AI training, but without validation, models trained solely on synthetic data tend to drift from real-world behavior.

Such AI models pass the testing phase but fail in deployment. So pure synthetic is not enough anymore.

But you don’t need to abandon synthetic data; all you have to do is add human validation to it.

To create synthetic labels that fit actual context and behavior, you need domain experts to review such annotations.

As synthetic data fills gaps in training data for rare, unique, or high-risk edge cases, validation also needs to be more rigorous.

So, we need to cultivate a hybrid approach where annotators don't spend time only drawing boxes, but move further.

They need knowledge of spatial realism and should be able to assess semantic correctness, validate data, and reject incorrect data. This data validation is the basis for building critical applications in industries such as robotics and geospatial intelligence.
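
One way to picture the hybrid approach is a dataset builder that admits synthetic samples only after a human reviewer has approved them. The Python sketch below is illustrative: the `Sample` fields, the `validated` flag, and the 50% cap on synthetic data are assumptions chosen for the example, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    sample_id: str
    source: str              # "real" or "synthetic"
    validated: bool = False  # set to True by a domain expert after review


def build_hybrid_dataset(samples, max_synthetic_fraction=0.5):
    """Keep all real samples plus human-validated synthetic ones, up to a cap."""
    if not 0 <= max_synthetic_fraction < 1:
        raise ValueError("max_synthetic_fraction must be in [0, 1)")
    real = [s for s in samples if s.source == "real"]
    approved = [s for s in samples if s.source == "synthetic" and s.validated]
    # synthetic / (real + synthetic) <= f  implies  synthetic <= f * real / (1 - f)
    cap = int(max_synthetic_fraction * len(real) / (1 - max_synthetic_fraction))
    return real + approved[:cap]


# Example: 4 real samples, 6 synthetic samples of which 5 passed review
pool = [Sample(f"real_{i}", "real") for i in range(4)]
pool += [Sample(f"syn_{i}", "synthetic", validated=(i != 0)) for i in range(6)]
dataset = build_hybrid_dataset(pool)
```

Rejected synthetic samples never reach training, and the cap keeps real-world data from being swamped. The right ratio depends on the application and is something to tune against real-world evaluation data.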

Hybrid data is gaining importance because some situations are difficult to capture in real life.

For cases such as accidents or extreme weather, synthetic data is generated and then validated against real-world driving data to ensure accuracy.

Healthcare is another example where synthetic data is used effectively, as it helps preserve patient privacy.

Synthetic satellite imagery works well for projects in defense and geospatial applications.

Synthetic data paves the way for training models to respond appropriately to real-world situations, even when field data is scarce.

So, the 2026 trends in machine learning annotation are about a hybrid approach that combines synthetic data with human validation.

Trend 4: Domain-specific "specialist" annotation

We are in an age where it is not about technically correct labels; what we need are contextually correct labels.

A technically correct label may still not give the model what it needs to perform well. So, we need to move from generalist annotation to specialist annotation.

In fields like healthcare, automation, and finance, we need contextual annotations.

The trend to follow is Expert-in-the-loop (EITL) workflows that involve annotators with domain expertise.

Depending on the field of application, these annotators may also be practicing professionals, such as radiologists, financial analysts, or even legal experts.

We now need annotators who can interpret much more complex patterns than we used to handle.

They need a grasp of edge cases and should know how to apply domain-specific rules.

Often, global regulations require that every detail be documented, including who performed the labeling, why a particular decision was made, and how bias was addressed.
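
A documentation requirement like this maps naturally onto a structured audit record stored alongside each label. The Python sketch below shows one possible shape; the class name `AnnotationAuditRecord` and every field name are hypothetical, and the actual fields you need depend on the regulation that applies to you.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AnnotationAuditRecord:
    item_id: str
    label: str
    annotator_id: str      # who performed the labeling
    annotator_role: str    # e.g. "radiologist"
    rationale: str         # why this decision was made
    bias_checks: tuple = ()  # how bias was addressed
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_audit_log_line(record):
    """Serialize a record as one JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)


# Example record (all values are made up for illustration)
record = AnnotationAuditRecord(
    item_id="scan_0042",
    label="benign",
    annotator_id="ann_17",
    annotator_role="radiologist",
    rationale="Smooth margins, no change from prior scan",
    bias_checks=("second-reader review",),
)
line = to_audit_log_line(record)
```

Making the record immutable (`frozen=True`) and writing it as one line per decision keeps the log easy to append to and hard to alter silently.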

Regulated industries are especially strict about such things, and compliance is difficult without expert annotators.

Domain expertise is a must in industries where subject knowledge is critical to effective model performance.

In healthcare, certain diagnoses require clinical judgment, which only someone with domain knowledge can provide.

For example, not just any annotator is competent to distinguish between benign and malignant patterns.

You would need imaging domain experts. Generic annotators may not have the relevant expertise to make that kind of critical judgment.

Another area where context and intent play an important role is the legal industry. The legal terminology must be understood well to avoid any misclassification.

So, again, domain experts play a role here in accurate annotation and model performance.

AI teams also leverage domain specialists' expertise to define guidelines, validate data, and understand edge cases.

Labeling errors and compliance gaps must be fixed at the annotation stage itself and not later, so domain specialists must identify problematic patterns early.

Domain-specific annotation supports that compliance. As a result, there is a shift to knowledge-driven processes where AI accuracy is expert-led.

Trend 5: The evolution of the annotation workforce

From repetitive task performers, annotators have grown into data critics, quality architects, and AI curators, because in 2026 labeling is more about humans guiding model behavior than about volumes of datasets.

Their role has now shifted from simple labeling to evaluating annotations.

As applications become more specific, domain-expert annotators become a mainstay of AI model reliability.

Their tasks now range from finding ambiguous samples or flagging bias cases to checking and approving outputs against benchmarks.

The trend is now toward a hybrid approach, with automated and manual annotation working together to ensure efficiency.

Quality is another issue the workforce cannot ignore, as annotation guidelines must adapt to changing data distributions.

Otherwise, the model will degrade or drift. Preventing drift, however, requires a stable workforce and a structured institutional memory for quality assessment to work effectively.
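
One simple signal that guidelines may need revisiting is a shift in the label distribution between an earlier reference batch and a recent batch. The Python sketch below uses total variation distance for that comparison. It is only a rough proxy for drift, and the function names and the 0.1 threshold are illustrative assumptions.

```python
from collections import Counter


def label_distribution(labels):
    """Relative frequency of each label in a batch."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


def total_variation_distance(p, q):
    """Half the sum of absolute differences between two distributions (0 to 1)."""
    all_labels = set(p) | set(q)
    return 0.5 * sum(abs(p.get(l, 0.0) - q.get(l, 0.0)) for l in all_labels)


def needs_guideline_review(reference_labels, recent_labels, threshold=0.1):
    """Flag a batch whose label mix has moved away from the reference batch."""
    distance = total_variation_distance(
        label_distribution(reference_labels),
        label_distribution(recent_labels),
    )
    return distance > threshold


# Example: a new class ("scooter") starts appearing in recent data
reference = ["car"] * 8 + ["pedestrian"] * 2
recent = ["car"] * 5 + ["pedestrian"] * 3 + ["scooter"] * 2
flag = needs_guideline_review(reference, recent)
```

A flagged batch does not prove that the model has drifted. It is a prompt for reviewers to check whether the guidelines still cover what the data now contains, such as a class that did not exist when the guidelines were written.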

Data annotation programs are no longer only about annotators; they have become more structured and organized, with workflows that incorporate multiple skills and expertise.

Domain specialists, QC experts, auditors, and reviewers are all now part of the structured data annotation process.

Annotators no longer try to force any label; instead, they flag any issue for further review.

The annotation's contextual accuracy and alignment to real-world data are then reviewed.

Review feedback loops are then used to craft guidelines and training. However, experts are always scarce.

To address such challenges, most AI and ML companies are partnering with established organizations that can provide trained annotation teams, domain experts, governance processes, documented QA, and stable delivery models.

Thus, in 2026, framing the correct workforce strategy has become critical for AI development.

Conclusion

Data annotation in 2026 is no longer driven solely by volume. Annotation teams have now understood that without quality and accuracy, simply pushing high volumes of labeled datasets cannot fulfill objectives.

And that means you need annotators who have domain expertise and contextual understanding, and who are serious about validation and review.

With multimodal and sensor-fusion annotation entering the mix, such annotators have now become indispensable.

In such a complex environment, automation helps, but without human oversight and domain guidance, mindless automation can propagate or scale errors.

Businesses today need to take ethical responsibility and invest heavily in annotators that can get to the core of contextual understanding, tackle biases, and ensure compliance and scalability.

Pushing volume while skipping investment in annotation quality has been recognized as ineffective.

In 2026, the quality of the annotators and experts who train models will determine the success of AI systems. Scenarios are changing, and trends are too. Without changing your approach, model performance may tank, so focus on quality.

Frequently Asked Questions

What are the top data annotation trends shaping AI in 2026?

Data annotation in 2026 is undergoing a huge shift from volume to training data quality, with stricter inter-annotator agreement (IAA) targets and quality control. It is no longer about how large your training data is. It is more about contextually correct training data. AI-assisted labeling with human validation is being used to avoid bias and ensure contextually correct labeling. Multimodal annotation is also in high demand, along with domain-specific annotation. And lastly, there's renewed focus on data governance and compliance, with increased demand for datasets that meet all applicable standards and regulations.
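
Inter-annotator agreement (IAA) is commonly measured with Cohen's kappa, which compares how often two annotators agree against the agreement expected by chance. The Python sketch below is a minimal two-annotator version using only the standard library; the label values are made up for illustration.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty item list")
    n = len(labels_a)
    # Fraction of items where the two annotators gave the same label
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected if each annotator labeled at random with their own label rates
    expected = sum(counts_a[l] * counts_b[l] for l in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Example: two reviewers label the same four scans
reviewer_1 = ["benign", "benign", "malignant", "malignant"]
reviewer_2 = ["benign", "benign", "malignant", "benign"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
```

A kappa of 1 means perfect agreement and 0 means agreement no better than chance. Teams typically set a minimum kappa per task and send items below it back for guideline clarification or expert review.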

How does AI-assisted labeling improve the data annotation process?

AI-assisted labeling improves the annotation process in several ways. Most importantly, it reduces manual effort and improves speed. The turnaround time improves significantly, as annotators only need to review the annotation rather than start from scratch. Since the manual cost is reduced, it also proves cost-effective and improves the ability to handle large volumes of data.

Why is multimodal and sensor-fusion annotation necessary for modern AI models?

As data complexity increases, there is a need to integrate multiple data types, including text, video, images, and sensor data. This is needed to capture real-world complexity and improve model robustness. The system uses multiple data sources to make accurate judgments and does not rely on a single one. Multimodal and sensor-fusion annotation enables the detection of edge cases and improves clarity. This cross-referencing of data increases the capacity of multimodal AI models and helps them meet user expectations.

Can AI models be trained effectively using only synthetic data?

Yes, models can be trained effectively using synthetic data, but with a few limitations. Synthetic data has its advantages; it can simulate edge-case scenarios that are hard to capture in real life. But there is always a risk of a domain gap between synthetic and real-world data, so it is a good idea to use a hybrid approach that combines synthetic and real data. Synthetic data on its own is most effective in early training and testing.

Why is domain-specific specialist annotation important for regulated industries?

Specialized and sensitive industries such as medical, legal, and financial require domain expertise. Without subject knowledge, accuracy often gets compromised. Generic annotators often miss domain nuances. With domain experts, there are fewer chances of critical errors creeping in, thereby improving model reliability and credibility. Often, complex data requires correct interpretation, and that is possible only by someone with subject knowledge.

How can the right data annotation partner improve AI model success?

Before you select a partner, you must ensure that the partner has relevant experience, especially in your domain, and has market credibility. Once you connect with the right partner, you don’t have to worry much. The right partner delivers high-quality and accurate datasets that ensure consistency and scalability. The partner with domain expertise will deliver industry-specific datasets. Having a partner reduces your turnaround and operational costs with advanced tools and automation. Lastly, you can be free from operational work and focus more on your core tasks.